Scheduling of Neural Network Operations on Embedded Multi-core CPUs

Assigned to H. Stotz.

Bachelor’s Thesis / Master’s Thesis / Student Research Project

Abstract

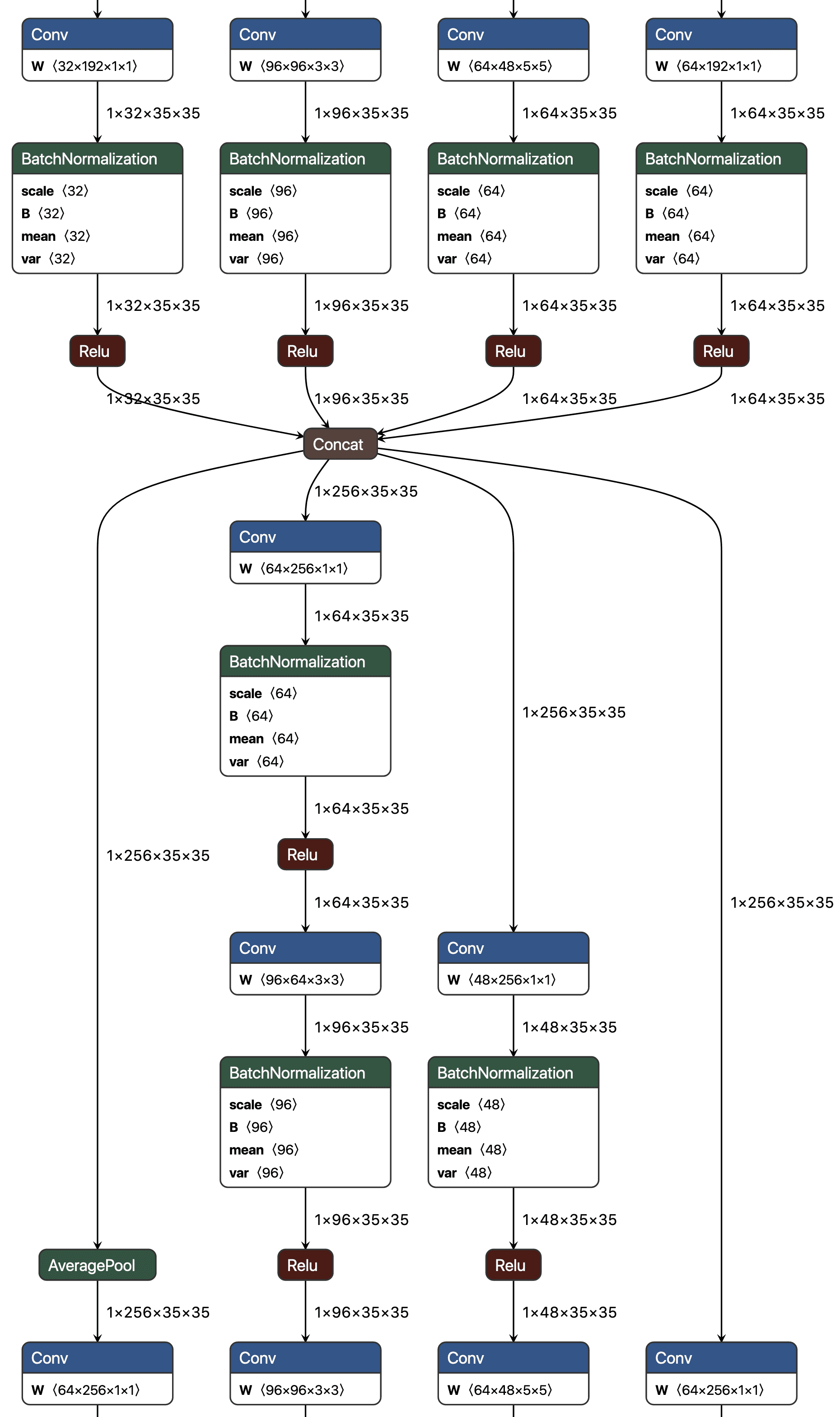

Inference of deep neural networks on embedded and edge devices is a very active area of research in academia and industry. Many contemporary neural network architectures contain multiple parallel branches that have no data dependencies between each other. Operations in different branches can therefore be executed concurrently, which makes such networks well suited for deployment on multi-core CPUs. Inception v3 is one example of such a network.

In this student research project, a scheduler for neural network operations on embedded multi-core CPUs is to be implemented and integrated into our open-source deep learning inference framework Pico-CNN [1] (https://github.com/ekut-es/pico-cnn). The implementation will be evaluated on a Raspberry Pi-like embedded system.
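To illustrate the kind of problem such a scheduler addresses, the following is a minimal sketch of greedy list scheduling for a dependency graph of operations. It is not part of Pico-CNN and does not use its API: the function `list_schedule`, the operation names, and the cost values are all hypothetical, chosen only to resemble a single Inception-style block with four independent branches.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+


def list_schedule(deps, cost, num_cores):
    """Greedy list scheduling (illustrative sketch, not Pico-CNN code).

    deps: dict mapping each operation to the set of operations it depends on
    cost: dict mapping each operation to an estimated run time (made-up units)
    Returns (schedule, makespan), where schedule maps op -> (core, start, end).
    """
    core_free = [0.0] * num_cores  # time at which each core becomes idle
    finish = {}                    # finish time of each scheduled operation
    schedule = {}
    # Visit operations in an order that respects all data dependencies.
    for op in TopologicalSorter(deps).static_order():
        # An operation may start only after all of its inputs are available.
        ready = max((finish[p] for p in deps.get(op, ())), default=0.0)
        # Choose the core on which this operation can start earliest.
        core = min(range(num_cores), key=lambda c: max(core_free[c], ready))
        start = max(core_free[core], ready)
        end = start + cost[op]
        core_free[core] = end
        finish[op] = end
        schedule[op] = (core, start, end)
    return schedule, max(finish.values(), default=0.0)


# Hypothetical Inception-style block: four branches share one input and
# are joined by a concatenation. Costs are assumptions, not measurements.
deps = {
    "input": set(),
    "branch_1x1": {"input"},
    "branch_3x3": {"input"},
    "branch_5x5": {"input"},
    "branch_pool": {"input"},
    "concat": {"branch_1x1", "branch_3x3", "branch_5x5", "branch_pool"},
}
cost = {"input": 1, "branch_1x1": 2, "branch_3x3": 4,
        "branch_5x5": 6, "branch_pool": 3, "concat": 1}

_, makespan_1 = list_schedule(deps, cost, num_cores=1)
_, makespan_4 = list_schedule(deps, cost, num_cores=4)
print(makespan_1, makespan_4)
```

With these assumed costs, one core needs the sum of all operation costs (17 units), while four cores are limited only by the longest path through the block (input, the 5x5 branch, concat: 8 units). A real scheduler would additionally have to account for effects this sketch ignores, such as measured per-operation run times, memory bandwidth, and thread synchronization overhead.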

This image was originally posted to Flickr by ghalfacree at https://flickr.com/photos/120586634@N05/39906369025 under the Creative Commons CC-BY-SA 2.0 license.


Requirements

- C

- Python

- Linux (optional)

References

[1] K. Lübeck and O. Bringmann, “A Heterogeneous and Reconfigurable Embedded Architecture for Energy-Efficient Execution of Convolutional Neural Networks,” in Architecture of Computing Systems – ARCS 2019, pp. 267–280 (Copenhagen, Denmark).